A daily bite-size selection of top business content.


Quote: Jensen Huang - Nvidia CEO

"I think we've just reinvented the computer." - Jensen Huang - Nvidia CEO

Reflecting on the evolution of computing, NVIDIA CEO Jensen Huang described a paradigm shift during his interview on the Lex Fridman Podcast #494, stating, "I think we've just reinvented the computer." This remark, made in the context of advanced AI systems, underscores how modern computing has moved from data retrieval to generative intelligence capable of research, tool usage, and synthetic data creation.1,2

Context of the Quote

Huang's statement emerged from a discussion on the architecture of future AI agents. He reasoned that these systems require access to ground truth data via file systems, the ability to conduct research, and integration with input/output subsystems and tools. This holistic view reveals computing's "deeply profound" implications, marking a reinvention in which AI evolves beyond passive storage into active, context-aware generation.2

Delivered on 23 March 2026, amid NVIDIA's ascent to a $4 trillion valuation, the quote captures the explosive growth of the 'token economy' - where AI produces 'token goods' like generated text, images, and code, turning computers from cost centres (akin to unprofitable warehouses) into revenue-generating factories.1

Backstory on Jensen Huang

Born in Taiwan in 1963, Jensen Huang co-founded NVIDIA in 1993 with Chris Malachowsky and Curtis Priem, initially focusing on graphics processing units (GPUs) for gaming and visualisation. Facing near-bankruptcy in the late 1990s, Huang pivoted NVIDIA towards programmable shaders and IEEE-compliant FP32 floating-point precision, enabling GPUs for general-purpose computing.2 The launch of CUDA in 2006 democratised this power, placing supercomputing capabilities in researchers' hands via PCs and outpacing rivals like OpenCL thanks to NVIDIA's massive install base.2

Under Huang's leadership, NVIDIA came to dominate AI hardware, powering breakthroughs from deep learning to large language models. By 2026, as CEO of the world's most valuable company, Huang envisions computing's GDP share surging 100-fold, with AI achieving artificial general intelligence (AGI) today - defined as systems autonomously building profitable applications.1,2

Leading Theorists in AI and Computing Reinvention

John McCarthy (1927-2011): Coined 'artificial intelligence' in 1956 at the Dartmouth Conference, pioneering Lisp and time-sharing systems. His vision of machines reasoning like humans laid foundational theory for AI's shift from rule-based to generative paradigms.2

Geoffrey Hinton: Often called the 'godfather of deep learning'. His backpropagation and neural network research in the 1980s, revitalised in 2012 via AlexNet (powered by NVIDIA GPUs), enabled the scaled training underpinning today's token-generating models.1

Yann LeCun and Yoshua Bengio: With Hinton, the 'three musketeers' of AI advanced convolutional networks and generative adversarial networks (GANs), theorising self-supervised learning that allows AI to synthesise data - echoing Huang's 'token factory'.1

Ilya Sutskever: Co-founder of OpenAI. His work on sequence transduction and reinforcement learning from human feedback (RLHF) helped give rise to models like GPT, which Huang sees as reinventing computing through tool-augmented agency.2

These theorists' ideas converged with NVIDIA's hardware, propelling Huang's prophecy: every profession - from carpenters to plumbers - will programme via natural language, expanding the number of coders from 30 million to 1 billion.1

Implications for the AI Revolution

Huang predicts AI disruption for task-based roles but empowerment for purpose-driven innovators. Power challenges will be met with 'elegant degradation' data centres utilising grid redundancies. As barriers to AI entry drop to zero - simply ask, 'How do I use you?' - the reinvention promises unprecedented productivity, with trillion-dollar companies becoming commonplace.1

References

2. https://lexfridman.com/jensen-huang-transcript/
3. https://www.youtube.com/watch?v=VWkSgbUkkh8
4. https://lexfridman.com/category/transcripts/
5. https://guardianbookshop.com/the-thinking-machine-9781847928276/
6. https://exclusivebooks.co.za/collections/new?page=79


Quote: FundaAI

"TurboQuant is not another DeepSeek moment." - FundaAI

The quote “TurboQuant is not another DeepSeek moment” (FundaAI, 26 March 2026) captures a specific market misreading that erupted after Google re-published its TurboQuant blog on 24 March 2026.

Core meaning of the quote

Why the distinction matters (first-principles view)

Thus the “linear extrapolation” that a 6× KV-cache reduction implies roughly 6× lower total memory demand is wrong.

Technical snapshot of TurboQuant

Market reaction that sparked the quote

Why FundaAI calls it “not another DeepSeek moment”

Bottom line

The market’s panic was a category error: conflating temporary inference cache with total model memory. TurboQuant is a pure throughput/context-length optimizer that lets existing HBM serve more concurrent users or longer contexts, but it does not compress the LLM itself. Therefore, it should not be modeled as a structural demand-destruction event for HBM/DRAM/SSD, unlike the genuine “DeepSeek moment” that altered compute-per-token economics across training and inference.
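
To make the category error concrete, the short Python sketch below compares the reduction in KV-cache size with the reduction in total serving memory. Only the 6× KV-cache factor comes from the text above; the weight and cache sizes (140 GB and 60 GB) are hypothetical round numbers chosen for illustration, and the function names are invented for this sketch.

```python
def total_memory_gb(weights_gb: float, kv_cache_gb: float) -> float:
    """Total accelerator memory: model weights plus inference KV cache."""
    return weights_gb + kv_cache_gb


def total_reduction_factor(weights_gb: float, kv_cache_gb: float,
                           kv_compression: float) -> float:
    """How much total memory shrinks when ONLY the KV cache is compressed."""
    before = total_memory_gb(weights_gb, kv_cache_gb)
    after = total_memory_gb(weights_gb, kv_cache_gb / kv_compression)
    return before / after


# Hypothetical deployment (illustrative numbers, not from the source).
weights_gb = 140.0      # model weights: untouched by KV-cache compression
kv_cache_gb = 60.0      # temporary per-request inference cache
kv_compression = 6.0    # the 6x KV-cache reduction discussed in the text

factor = total_reduction_factor(weights_gb, kv_cache_gb, kv_compression)
print(f"KV cache shrinks {kv_compression:.0f}x, "
      f"total memory shrinks only {factor:.2f}x")
```

Under these assumed sizes the total footprint falls far less than 6×, because the weights dominate and are not compressed. In practice the freed cache memory may simply be reused for more concurrent users or longer contexts, which is the throughput reading described above.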


Term: Multiple on Invested Capital (MOIC)

"Multiple on Invested Capital (MOIC) measures the total value returned from an investment relative to the total equity capital invested, expressed as a simple multiple (e.g., 2.5×). In private equity, MOIC captures the absolute value creation of an investment without regard to the time taken to achieve it." - Multiple on Invested Capital (MOIC)

The Multiple on Invested Capital (MOIC) is a financial metric that measures the total value returned from an investment relative to the total equity capital invested, expressed as a simple multiple (for example, 2.5×).1 In private equity, MOIC captures the absolute value creation of an investment without regard to the time taken to achieve it, making it one of the most commonly used performance indicators across the industry.3

Core Definition and Calculation

MOIC answers a fundamental question: how many times has the initial capital been multiplied?3 The metric is calculated using a straightforward formula:

\[ \text{MOIC} = \frac{\text{Total Value of Investment}}{\text{Total Equity Capital Invested}} \]

Alternatively expressed as:

\[ \text{MOIC} = \frac{\text{Realised Value} + \text{Unrealised Value}}{\text{Total Equity Capital Invested}} \]

The total value of investment includes all cash received from the investment - such as dividends, profits, and eventual sale proceeds - as well as unrealised gains, which represent the potential future value of the investment if sold at current market rates.8

Practical Examples

A MOIC value of 2.0× indicates that a private equity fund has doubled its original investment.3 If a fund invested £1 million and received £3 million from the investment, the fund would have a MOIC of 3.0×.5 In a more substantial scenario, if a private equity fund invests £100 million in a company and generates £500 million in total value (both realised and unrealised), the MOIC would be 5.0×, indicating a fivefold return on the initial capital.8

Key Characteristics and Advantages

MOIC provides several distinct advantages as a performance metric:

MOIC in Context: Related Metrics

Whilst MOIC is excellent for quickly assessing investment success, it is typically calculated alongside other performance metrics to provide a more holistic understanding:3

Interpreting MOIC Performance

A higher MOIC is perceived positively because it implies that investments are profitable and have generated substantial value.4 Conversely, a lower MOIC is viewed negatively, as it indicates that the investment may be unprofitable and investors risk not receiving their target return or even recouping their initial capital.4 In private equity practice, a MOIC of 2.0× or above is generally considered a strong outcome, though expectations vary by fund strategy and market conditions.

Terminology and Variations

The term MOIC is interchangeable with several other expressions commonly used in investment circles:4 "Multiple on Money" (MoM) and "cash-on-cash return" are synonymous terms that describe the same metric. This consistency of terminology reflects the widespread adoption of MOIC across venture capital, private equity, and hedge fund sectors.6

David Rubenstein and the Professionalisation of Private Equity Metrics

The systematic use of MOIC and other standardised performance metrics in private equity owes much to David Rubenstein, co-founder of The Carlyle Group, who has been instrumental in professionalising the private equity industry since the 1980s. Rubenstein recognised that private equity required transparent, comparable metrics to attract institutional capital and build credibility with limited partners.

Born in 1949, Rubenstein earned his undergraduate degree from Duke University and his law degree from the University of Chicago. After working as a lawyer and in the White House during the Carter administration, he co-founded Carlyle in 1987 with William E. Conway Jr. and Daniel A. D'Aniello. At a time when private equity was largely opaque and driven by informal relationships, Rubenstein championed the adoption of standardised reporting metrics, including MOIC, IRR, and DPI, which became industry benchmarks.

Rubenstein's advocacy for transparency and rigorous performance measurement helped transform private equity from a relatively closed industry into one that could attract substantial institutional investment from pension funds, endowments, and sovereign wealth funds. His emphasis on clear, quantifiable metrics like MOIC enabled investors to compare fund performance objectively and hold managers accountable for value creation. Under his leadership, Carlyle grew to become one of the world's largest private equity firms, managing over £300 billion in assets, and his influence on industry standards remains profound. Rubenstein's belief that "you can't manage what you don't measure" became a guiding principle for the entire private equity sector, making MOIC and related metrics central to how the industry evaluates and communicates investment success.

References

1. https://eqtgroup.com/en/thinq/Education/what-does-moic-mean-in-private-equity
2. https://www.fe.training/free-resources/private-equity/what-is-moic-in-private-equity/
3. https://www.allvuesystems.com/resources/what-is-moic-in-private-equity/
4. https://www.wallstreetprep.com/knowledge/moic-multiple-on-invested-capital/
5. https://www.careerprinciples.com/resources/multiple-on-invested-capital-moic
6. https://carta.com/learn/private-funds/management/fund-performance/moic/
7. https://calebblandlaw.com/blog/what-is-moic-in-the-context-of-private-equity/
8. https://www.financealliance.io/multiple-on-invested-capital-moic/
9. https://waveup.com/blog/understanding-moic-in-private-equity/
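
As a small illustration of the MOIC calculation defined in this entry, the Python sketch below reproduces the two worked examples (£1 million returning £3 million, and £100 million generating £500 million in total value). The function names are invented for this sketch, and the £300 million / £200 million split between realised and unrealised value in the second example is an assumption, since the entry gives only the total. The `implied_annual_return` helper is also an addition: it assumes a single cash outflow and a single inflow, and is included only to show why MOIC "without regard to the time taken" says nothing about annual performance.

```python
def moic(realised_value: float, unrealised_value: float,
         invested_capital: float) -> float:
    """MOIC = (realised value + unrealised value) / equity capital invested."""
    if invested_capital <= 0:
        raise ValueError("invested_capital must be positive")
    return (realised_value + unrealised_value) / invested_capital


def implied_annual_return(multiple: float, years: float) -> float:
    """Annualised return implied by a multiple, assuming one cash outflow
    at the start and one inflow at the end (a simplification, not IRR)."""
    return multiple ** (1.0 / years) - 1.0


# Example 1 from the entry: GBP 1m invested, GBP 3m received.
small_fund = moic(3_000_000, 0, 1_000_000)

# Example 2 from the entry: GBP 100m invested, GBP 500m total value.
# The realised/unrealised split below is hypothetical.
large_fund = moic(300_000_000, 200_000_000, 100_000_000)

print(f"Example 1 MOIC: {small_fund:.1f}x")
print(f"Example 2 MOIC: {large_fund:.1f}x")

# The same 3.0x multiple implies very different annual returns
# depending on how long the capital was tied up.
for years in (3, 10):
    rate = implied_annual_return(small_fund, years)
    print(f"3.0x over {years} years: {rate:.1%} per year")
```

The last loop is the reason MOIC is usually read alongside time-sensitive measures such as IRR: the multiple alone cannot distinguish a quick exit from a long hold.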


Quote: Max Planck - Nobel laureate

"It is not the possession of truth, but the success which attends the seeking after it, that enriches the seeker and brings happiness to him." - Max Planck - Nobel laureate

In the chapter 'Is the external world real?' from his 1932 book Where Is Science Going? The Universe in the Light of Modern Physics, Max Planck articulates a timeless philosophy on scientific endeavour. This reflection emerges amid discussions on the nature of reality, the limits of human knowledge, and the relentless drive of scientific inquiry1,2. Planck, a Nobel laureate in Physics, emphasises that true fulfilment lies not in grasping absolute truth - an elusive goal - but in the very act of pursuit, where each discovery enriches the mind and spirit3.

The Life and Legacy of Max Planck

Born in 1858 in Kiel, Germany, Max Karl Ernst Ludwig Planck grew up in a scholarly family during a time of intellectual ferment. He studied physics, mathematics, and philosophy at the universities of Munich and Berlin, where his teachers included Gustav Kirchhoff and Hermann von Helmholtz, and earned his doctorate in 1879. Planck was initially drawn to thermodynamics, but his career pivoted dramatically in 1900 when he resolved the 'ultraviolet catastrophe' in black-body radiation. By introducing the concept of energy quanta - discrete packets rather than continuous flow - he laid the cornerstone of quantum theory, revolutionising physics1,2.

Planck received the 1918 Nobel Prize in Physics for this groundbreaking work. Yet his life was marked by profound personal tragedy: his first wife died in 1909, both of his daughters died in childbirth, and during the Nazi era his son Erwin was executed for alleged involvement in the plot to assassinate Hitler. Despite such losses, Planck remained a steadfast advocate for academic integrity, resisting Nazi interference in science while navigating the regime's pressures1. He served as president of the Kaiser Wilhelm Society (predecessor to the Max Planck Society) from 1930 to 1937, embodying resilience and ethical commitment.

The Context of the Quote

Published in 1932, Where Is Science Going? captures Planck's mature reflections on quantum mechanics' upheavals, causality, free will, and science's philosophical boundaries. The quote appears in a meditation on whether the external world exists independently of observation - a question echoing quantum uncertainties. Planck argues that science progresses through imaginative leaps and persistent effort, not flawless logic alone. He likens the researcher's path to a labyrinth, lit by occasional insights amid errors, underscoring that the 'success which attends the seeking' fuels progress and personal growth2,3. This era followed quantum theory's consolidation by figures like Einstein, Bohr, and Heisenberg, prompting Planck to defend classical intuitions while embracing modernity.

Leading Theorists in the Pursuit of Truth in Physics

Planck's ideas resonate with pioneers who shaped the philosophy of scientific truth-seeking:

These theorists, connected through Planck's quantum revolution, illustrate that scientific truth emerges from collective, iterative striving - a theme central to the quote. Their legacies affirm Planck's wisdom: the journey itself illuminates and fulfils.

References

1. https://www.goodreads.com/author/quotes/107032.Max_Planck
2. https://en.wikiquote.org/wiki/Max_Planck
3. https://www.deeplook.ir/wp-content/uploads/2016/09/Max_Planck_Where_Is_Science_Going.pdf
4. https://www.goodreads.com/quotes/131973-it-is-not-the-possession-of-truth-but-the-success
5. https://www.whatshouldireadnext.com/quotes/max-planck-it-is-not-the-possession
6. https://www.azquotes.com/author/11714-Max_Planck/tag/science
7. https://todayinsci.com/P/Planck_Max/PlanckMax-Quotations.htm


Quote: Jensen Huang - Nvidia CEO

"If your job is the task, then you're very highly [likely] going to be disrupted." - Jensen Huang - Nvidia CEO

Jensen Huang's observation that roles defined primarily by task execution face significant disruption risk marks a shift in how we understand artificial intelligence's impact on the workforce. This statement, made during his recent appearance on the Lex Fridman Podcast, encapsulates a perspective that has become increasingly central to Huang's public messaging about AI's trajectory - one that distinguishes sharply between the displacement of routine work and the evolution of human capability.

The Context of Huang's Remarks

Huang's statement arrives at a moment of considerable market anxiety regarding AI's disruptive potential. In recent weeks, software stocks have experienced significant pressure, with investors expressing concerns that artificial intelligence tools - particularly large language models like Claude - could render traditional enterprise software platforms obsolete. The iShares Expanded Tech-Software Sector ETF has declined nearly 22% year-to-date, reflecting broader apprehension about technological displacement.1 This market sentiment provided the backdrop for Huang's clarification of what he views as a fundamental misunderstanding about AI's relationship to human work.

What distinguishes Huang's framing is his deliberate parsing of different categories of employment. Rather than offering blanket reassurance that AI poses no threat to jobs, he articulates a more granular thesis: the vulnerability of any given role correlates directly with the degree to which that role can be reduced to discrete, repeatable tasks. This is a more intellectually honest assessment than simple dismissal of disruption concerns, whilst also offering a pathway for workers and organisations to think strategically about adaptation.

Huang's Broader Vision: AI as Tool User, Not Tool Replacer

This statement must be understood within the context of Huang's larger argument about AI's fundamental nature. He has consistently maintained that markets have miscalculated the threat AI poses to software companies, arguing instead that AI will function as an intelligent agent that uses existing software tools rather than replacing them.1 In his view, legacy enterprise platforms such as SAP and ServiceNow will continue to play vital roles because they "exist for a fundamentally good reason."1 AI, in this conception, becomes a layer of intelligence that sits atop existing infrastructure, amplifying human capability rather than rendering it redundant.

However, Huang's acknowledgement that task-based roles face disruption introduces important nuance to this optimistic framing. He is not arguing that AI poses no displacement risk whatsoever. Rather, he is suggesting that the risk is not uniformly distributed across the labour market. Roles that consist primarily of executing defined procedures - whether in software development, data entry, customer service, or routine analysis - face genuine disruption. Conversely, roles that require judgment, creativity, strategic thinking, and human connection remain substantially more resilient.

The Philosophical Underpinnings: Task Versus Purpose

Huang's distinction between task-based and purpose-driven work echoes themes that have emerged across technology leadership in recent months. At Nvidia itself, Huang has been notably aggressive in pushing employees to adopt AI tools across their workflows, famously responding to reports of managers discouraging AI use with the rhetorical question: "Are you insane?"2 His directive that he wants "every task that is possible to be automated with artificial intelligence to be automated" reflects a conviction that the path forward involves embracing AI augmentation rather than resisting it.2

Yet this aggressive automation stance coexists with Huang's assertion that Nvidia continues to hire aggressively - the company brought on "several thousand" employees in the most recent quarter and remains "probably still about 10,000 short" of its hiring targets.2 This apparent contradiction resolves when one understands Huang's underlying thesis: automation of tasks does not necessarily eliminate employment; rather, it transforms the nature of work. Workers freed from routine task execution can focus on higher-order problems, strategic initiatives, and creative endeavours that machines cannot yet replicate.

The Broader Intellectual Landscape: Theorists of Technological Disruption

Huang's framework aligns with and draws from several established schools of thought regarding technological change and employment. The distinction between task-based and skill-based labour disruption has been central to economic analysis of automation for decades. David Autor, an economist at MIT, has extensively documented how technological change tends to polarise labour markets, eliminating routine middle-skill jobs whilst creating demand for both high-skill and low-skill positions. Autor's research suggests that the jobs most vulnerable to automation are precisely those that Huang identifies - roles defined by repetitive, rule-based task execution.

Similarly, Erik Brynjolfsson and Andrew McAfee, in their influential work on the "second machine age," have argued that digital technologies create a bifurcated labour market. Their analysis suggests that whilst routine cognitive and manual tasks face displacement, roles requiring complex problem-solving, emotional intelligence, and creative synthesis remain resilient. This framework provides intellectual scaffolding for Huang's more granular assessment of disruption risk.

The concept of "task-biased technological change" has also been explored by economists including Daron Acemoglu, who has examined how different technologies affect different categories of work. Acemoglu's research distinguishes between technologies that augment human capability and those that substitute for it - a distinction that maps closely onto Huang's characterisation of AI as a tool-using agent rather than a wholesale replacement for human labour.

AI as Infrastructure: The Longer View

Huang has recently articulated an even broader vision of AI's role in the economy, describing it as "no longer a single breakthrough or application" but rather "essential infrastructure."4 This framing positions AI alongside electricity, telecommunications, and the internet as foundational technologies that reshape economic activity across all sectors. From this perspective, the question is not whether AI will disrupt particular jobs - it almost certainly will - but rather how societies and organisations manage the transition and capture the productivity gains that AI enables.

This infrastructure metaphor carries important implications. Just as the electrification of manufacturing in the early twentieth century eliminated certain categories of jobs whilst creating entirely new industries and employment categories, AI's integration into economic life will likely produce similar dynamics. The workers most at risk are those whose roles consist primarily of executing tasks that AI can perform more efficiently. Those whose work involves judgment, strategy, relationship-building, and creative problem-solving face a different calculus - one in which AI becomes a tool that amplifies their effectiveness rather than a replacement for their labour.

The Nvidia Perspective: Pragmatism and Self-Interest

It is worth noting that Huang's analysis, whilst intellectually coherent, also reflects Nvidia's commercial interests. As the world's most valuable publicly traded company, with a market capitalisation of $4.8 trillion, Nvidia has profound incentives to promote narratives that encourage AI adoption and investment.1 Huang's argument that AI will augment rather than replace human labour serves to assuage concerns that might otherwise dampen investment in AI infrastructure and applications.

Nevertheless, the substance of his argument - that task-based roles face greater disruption risk than purpose-driven ones - appears robust across multiple analytical frameworks. The distinction he draws is not merely self-serving rhetoric but reflects genuine economic dynamics that scholars and analysts across the ideological spectrum have documented.

Implications for Workers and Organisations

Huang's framework offers practical guidance for both individuals and organisations navigating the AI transition. For workers, the implication is clear: roles that can be fully specified as a series of tasks face genuine disruption risk. Conversely, developing capabilities in areas that require judgment, creativity, and human connection - areas where AI remains substantially less capable - represents a rational career strategy. For organisations, the message is equally straightforward: the path to productivity gains and competitive advantage lies not in wholesale replacement of human workers but in strategic deployment of AI to handle routine tasks, thereby freeing human talent for higher-value work.

This perspective also suggests that the anxiety currently gripping software stocks may be partially misplaced. If AI functions as a tool that uses existing software platforms rather than replacing them, then companies like ServiceNow and SAP may find their market positions strengthened rather than weakened by AI adoption. The software industry's role would evolve from direct human interaction to serving as the infrastructure layer upon which AI agents operate - a shift in function but not necessarily in fundamental value.

The Unresolved Tensions

Despite the coherence of Huang's framework, important questions remain unresolved. The transition period during which task-based jobs are displaced but new opportunities have not yet fully emerged could prove economically and socially disruptive. The pace of AI advancement may outstrip the ability of workers and educational systems to adapt. And the distribution of AI's productivity gains remains uncertain: whether those gains will be broadly shared or concentrated among capital owners and highly skilled workers is an open question that Huang's analysis does not fully address.

Furthermore, Huang's optimism about continued hiring at Nvidia and other technology companies may not generalise across the broader economy. Whilst Nvidia can afford to hire aggressively whilst automating tasks, smaller organisations with tighter margins may face different pressures. The aggregate labour market effects of widespread AI adoption remain genuinely uncertain, despite Huang's confident assertions.

Conclusion: A Nuanced View of Disruption

Huang's statement that task-based roles face significant disruption risk whilst purpose-driven work remains resilient represents a more intellectually honest assessment of AI's impact than either blanket optimism or apocalyptic pessimism. It acknowledges genuine disruption whilst suggesting that the disruption is neither universal nor necessarily catastrophic. The framework aligns with established economic analysis of technological change and provides practical guidance for individuals and organisations seeking to navigate the AI transition strategically. Whether this optimistic vision of augmentation rather than replacement ultimately proves accurate will depend on policy choices, investment decisions, and the pace of technological development in the years ahead.

References

2. https://fortune.com/2025/11/25/nvidia-jensen-huang-insane-to-not-use-ai-for-every-task-possible/


Term: Multiple Expansion

"Multiple expansion refers to the increase in a company's valuation multiple at exit relative to the multiple paid at entry, holding operating performance constant." - Multiple Expansion

Multiple expansion occurs when an asset is purchased at one valuation multiple and subsequently sold at a higher valuation multiple, with the increase in multiple representing a source of investment returns independent of operational improvements.1,2 This concept forms a cornerstone of private equity investment strategy, particularly in leveraged buyouts (LBOs) and consolidation transactions.

Core Mechanics

At its essence, multiple expansion is a form of arbitrage.2 A private equity firm acquires a company trading at a lower earnings multiple - for example, 7.0x EBITDA - and exits the investment at a higher multiple, such as 10.0x EBITDA.1 The difference between entry and exit multiples directly enhances returns to equity investors, independent of any improvement in the underlying business's financial performance.

Consider a practical example: a financial sponsor acquires a company generating £10 million in EBITDA at 7.0x, resulting in a purchase enterprise value of £70 million. If the sponsor later sells the same company at 10.0x EBITDA (assuming EBITDA remains constant), the enterprise value rises to £100 million. The 3.0x multiple expansion - from 7.0x to 10.0x - creates £30 million in additional value, even though the underlying business has not improved operationally.1

Multiple Expansion in Consolidation Strategies

Multiple expansion proves particularly powerful in industry consolidation or "roll-up" strategies, where private equity firms acquire multiple smaller companies and combine them into a larger entity.3 Smaller companies typically command lower valuation multiples than larger competitors. For instance, a company with £500,000 to £1 million in EBITDA might trade at 4-7x EBITDA, whilst a company with £10 million in EBITDA might trade at 10x EBITDA.3

A concrete illustration demonstrates this principle: suppose a private equity firm acquires ten smaller companies, each generating £1 million in EBITDA and individually valued at 6x EBITDA (£6 million each). The total acquisition cost is £60 million. When consolidated into a single entity with £10 million in combined EBITDA, the aggregated company may command a 10x multiple, resulting in a £100 million valuation.3 The firm has created £40 million in value purely through multiple expansion, without requiring operational improvements.

Intrinsic versus Market Multiple Expansion

Multiple expansion can be decomposed into two components: market-driven and intrinsic.5 Market multiple expansion reflects broader economic and industry conditions that cause valuation multiples to rise across the sector. Intrinsic multiple expansion, by contrast, results from management actions and operational improvements that cause a portfolio company to outperform its market.5

Intrinsic multiple expansion is achieved through strategies such as expanding product or service offerings, entering new geographic markets, reducing customer concentration, implementing improved pricing strategies, forming strategic partnerships, executing complementary acquisitions, and divesting non-core assets.5 For example, if a company's EBITDA multiple increases from 5.0x to 6.5x (+30%) whilst the market multiple increases from 8.0x to 10.0x (+25%), the company has generated positive intrinsic multiple expansion of approximately 5 percentage points relative to market performance.5

Mathematical Framework

The equity return contribution from multiple expansion can be expressed as:

\[ \text{Return from Multiple Expansion} = \frac{\text{Exit Multiple} - \text{Entry Multiple}}{\text{Entry Multiple}} \times 100\% \]

In the earlier example with entry at 7.0x and exit at 10.0x:

\[ \text{Return from Multiple Expansion} = \frac{10.0 - 7.0}{7.0} \times 100\% \approx 42.9\% \]

This return is realised purely from the change in valuation multiple, independent of EBITDA growth or leverage paydown.

Practical Considerations

Whilst multiple expansion offers significant return potential, several factors influence its realisation. Market conditions at exit substantially affect achievable multiples; economic downturns may compress multiples across industries, limiting expansion opportunities. Additionally, the initial purchase multiple reflects market perception of risk; companies purchased at low multiples often carry higher operational or market risk, which may persist through the holding period.2

Successful multiple expansion frequently requires integration and realisation of synergies. When combining acquired companies, private equity sponsors identify revenue synergies and cost-saving opportunities that enhance EBITDA, thereby supporting higher exit multiples.3 Without such operational improvements, achieving multiple expansion becomes dependent entirely on favourable market conditions at exit.

Historical Context and Key Theorist: Henry Kravis

Henry Kravis, co-founder of Kohlberg Kravis Roberts & Co. (KKR), stands as the seminal figure in popularising and systematising multiple expansion as a core private equity value creation driver. Born in 1944, Kravis revolutionised the leveraged buyout industry during the 1980s and 1990s, establishing KKR as one of the world's most influential private equity firms.

Kravis's relationship to multiple expansion stems from his pioneering work in LBO structuring and portfolio company management. During the 1980s, when KKR executed landmark transactions including the roughly $25 billion acquisition of RJR Nabisco in 1989 - then the largest LBO ever completed - Kravis demonstrated that substantial equity returns could be generated not merely through debt paydown or EBITDA growth, but through strategic acquisition of undervalued assets and their subsequent sale at market-appropriate multiples.
Kravis's investment philosophy centred on identifying companies trading below intrinsic value, improving operational performance through active management, and exiting when market conditions permitted multiple expansion. This approach required deep industry expertise, disciplined capital allocation, and patience in holding periods - principles that became foundational to modern private equity practice. Born in Tulsa, Oklahoma, Kravis studied economics at Claremont Men's College (now Claremont McKenna College) before earning an MBA from Columbia Business School. He joined Bear Stearns in 1969, where he worked alongside Jerome Kohlberg Jr., pioneering early LBO techniques. In 1976, Kravis and Kohlberg, along with George Roberts, established KKR, which grew to manage hundreds of billions in assets across multiple continents. Kravis's legacy extends beyond transaction execution; he articulated and formalised the theoretical framework through which private equity creates value. His emphasis on multiple expansion as a distinct return driver, separate from operational improvement and leverage paydown, provided clarity to investors and shaped how the industry measures and communicates value creation. Through KKR's portfolio company management practices, Kravis demonstrated that multiple expansion could be systematically pursued through industry consolidation, operational excellence, and strategic capital deployment. His work during the 1980s and 1990s established the template for modern private equity, wherein multiple expansion remains a primary objective alongside operational value creation. Kravis's influence persists in contemporary private equity strategy, particularly in consolidation plays and industry roll-ups, where the acquisition of smaller, lower-multiple businesses and their combination into larger, higher-multiple entities directly reflects principles he pioneered.

References

1. https://www.wallstreetprep.com/knowledge/multiple-expansion/
2. https://corporatefinanceinstitute.com/resources/valuation/multiple-expansion/
3. https://hillviewps.com/the-concept-of-multiples-expansion-how-most-private-equity-works/
4. https://multipleexpansion.com/2020/02/13/multiple-expansion-definition/
5. https://auxiliamath.com/how-pe-managers-drive-intrinsic-multiple-expansion/
6. https://www.youtube.com/watch?v=ngn7J61iRqA
7. https://www.divestopedia.com/definition/864/multiple-expansion/
8. https://kailashconcepts.com/multiple-expansion-and-stock-performance/
9. https://www.wallstreetoasis.com/resources/skills/valuation/multiple-expansion
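
The arithmetic in the multiple expansion entry above (the ten-company roll-up, the return formula, and the intrinsic-versus-market comparison) can be checked with a short script. This is a minimal sketch rather than a valuation model: the function names are hypothetical, figures are in £ millions, and intrinsic expansion is assumed to be the simple difference between the company's and the market's percentage change in multiple, as the worked example in the text implies.

```python
def multiple_expansion_return(entry_multiple: float, exit_multiple: float) -> float:
    """Percentage return from the change in valuation multiple alone."""
    return (exit_multiple - entry_multiple) / entry_multiple * 100.0


def roll_up_value(n_companies: int, ebitda_each: float,
                  entry_multiple: float, exit_multiple: float) -> tuple[float, float, float]:
    """Return (total cost, consolidated value, value created) for a roll-up.

    Assumes combined EBITDA is the plain sum of the acquired companies'
    EBITDA, i.e. no synergies and no operational improvement.
    """
    combined_ebitda = n_companies * ebitda_each
    total_cost = combined_ebitda * entry_multiple
    consolidated_value = combined_ebitda * exit_multiple
    return total_cost, consolidated_value, consolidated_value - total_cost


def intrinsic_expansion_points(company_entry: float, company_exit: float,
                               market_entry: float, market_exit: float) -> float:
    """Company multiple change minus market multiple change, in percentage points."""
    company_change = multiple_expansion_return(company_entry, company_exit)
    market_change = multiple_expansion_return(market_entry, market_exit)
    return company_change - market_change


if __name__ == "__main__":
    # Ten companies, £1m EBITDA each, bought at 6x and valued together at 10x.
    cost, value, created = roll_up_value(10, 1.0, 6.0, 10.0)
    print(f"Cost £{cost:.0f}m, value £{value:.0f}m, created £{created:.0f}m")

    # Entry at 7.0x, exit at 10.0x.
    print(f"Return from multiple expansion: {multiple_expansion_return(7.0, 10.0):.1f}%")

    # Company 5.0x -> 6.5x against market 8.0x -> 10.0x.
    points = intrinsic_expansion_points(5.0, 6.5, 8.0, 10.0)
    print(f"Intrinsic expansion: {points:.1f} percentage points")
```

Keeping the three calculations in separate functions mirrors the way the text separates the roll-up effect, the overall return contribution, and the intrinsic component; none of them accounts for EBITDA growth or leverage.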


Quote: Max Planck - Nobel laureate

"A new scientific truth does not generally triumph by persuading its opponents and getting them to admit their errors, but rather by its opponents gradually dying out and giving way to a new generation that is raised on it." - Max Planck - Nobel laureate

The observation that scientific progress often requires generational change rather than individual conversion represents one of the most candid reflections on the nature of scientific advancement. This principle emerged from the lived experience of one of the twentieth century's most transformative physicists, whose own struggles to gain acceptance for revolutionary ideas shaped his understanding of how science actually evolves.

Max Planck: The Reluctant Philosopher of Science

Max Planck (1858-1947) was a German theoretical physicist whose contributions fundamentally altered our understanding of matter and energy.1 As the originator of quantum theory, Planck discovered that energy is emitted in discrete packets called quanta, a finding that would eventually underpin modern physics and enable the theoretical frameworks of Einstein and subsequent generations of scientists.3 Yet despite the revolutionary nature of his work, Planck's path to recognition was neither swift nor universally celebrated. Planck's reflection on scientific change emerged not from abstract philosophical speculation but from personal frustration. In his own words, recorded in his 1949 Scientific Autobiography, he expressed the pain of his experience: "It is one of the most painful experiences of my entire scientific life that I have but seldom…[succeeded] in gaining universal recognition for a new result, the truth of which I could demonstrate by a conclusive, albeit only theoretical proof."1 This candid admission reveals that Planck's principle was born from the gap between theoretical demonstration and practical acceptance - a gap he experienced acutely throughout his career. 

The Genesis and Context of the Principle

Planck articulated his observation in his Scientific Autobiography, published posthumously in 1949 (originally in German in 1948, the year after his death). The fuller formulation reads: "A new scientific truth does not triumph by convincing its opponents and making them see the light, but rather because its opponents eventually die, and a new generation grows up that is familiar with it."2 He elaborated further: "An important scientific innovation rarely makes its way by gradually winning over and converting its opponents: it rarely happens that Saul becomes Paul. What does happen is that its opponents gradually die out, and that the growing generation is familiarized with the ideas from the beginning: another instance of the fact that the future lies with the youth."2 What makes this observation remarkable is that Planck himself identified it as such - he called it "a remarkable fact."1 The principle addresses a fundamental tension in scientific practice: despite science's claim to objectivity and rationality, it remains a deeply human endeavour, subject to the psychological, social, and biological constraints that govern all human activity. Planck recognised that the triumph of new scientific truths depends not primarily on the logical force of evidence, but on the passage of time and the natural succession of generations.

Interpreting Planck's Insight: Multiple Dimensions

Scholars have identified several complementary interpretations of Planck's principle, each illuminating different aspects of scientific change.1 One interpretation emphasises the age-related dimension: older scientists, having invested their careers and reputations in existing theoretical frameworks, may be psychologically and professionally resistant to paradigm shifts. Younger scientists, by contrast, encounter new ideas without the burden of prior commitment and can adopt them more readily. 
A second interpretation connects Planck's observation to Karl Popper's philosophy of science, particularly the concept of falsifiability. Where Popper emphasised rational refutation of theories, Planck's principle suggests that scientific change operates through a different mechanism: not conversion through logical argument, but replacement through generational succession.1 This distinction matters: it implies that scientific progress may be less rational and more evolutionary than philosophers of science have traditionally assumed. A third, perhaps most fundamental interpretation treats Planck's statement as a truism - an important but often overlooked truth about the biological reality of scientific practice.1 Science progresses not because individual minds are particularly malleable or rational, but because the human lifespan is finite. New theories need not convince everyone; they need only survive long enough for their proponents to train the next generation whilst their opponents eventually pass away. This interpretation emphasises that science, despite its aspirations to transcend human limitation, remains embedded in human biology and mortality.

The Principle in Practice: Quantum Theory and Beyond

Planck's own experience with quantum theory exemplifies his principle. When he first proposed that energy is quantised - emitted in discrete packets rather than continuously - the idea met with considerable resistance from the established physics community. Even Albert Einstein, who would later extend quantum ideas, initially had reservations. Yet within a generation, quantum mechanics became the foundation of modern physics, not because Planck's opponents suddenly saw the light, but because a new generation of physicists - including Werner Heisenberg, Erwin Schrödinger, and Paul Dirac - grew up with quantum ideas as their intellectual inheritance. The principle has proven remarkably durable. In 1962, Thomas S. 
Kuhn cited Planck's insight in his landmark work The Structure of Scientific Revolutions, using it to support his argument that scientific progress occurs through paradigm shifts rather than gradual accumulation of knowledge.3 Economist Paul A. Samuelson popularised a more concise formulation - "Science progresses one funeral at a time" - which captured the principle's essence in memorable language.3 This phrasing, whilst somewhat macabre, underscores the principle's central claim: generational succession, not rational persuasion, drives scientific change.

The Broader Theoretical Landscape

Planck's principle intersects with several major theoretical frameworks in the philosophy and sociology of science. Thomas Kuhn's concept of paradigm shifts directly engages with Planck's observation: paradigms change not because scientists within the old paradigm convert to the new one, but because the old paradigm's defenders eventually retire and die, whilst younger scientists adopt the new paradigm from the outset.3 This process explains why scientific revolutions often appear sudden and discontinuous rather than gradual. The principle also resonates with sociological studies of scientific knowledge. Rather than viewing science as a realm of pure rationality insulated from social and psychological factors, this perspective acknowledges that scientists are human beings embedded in social networks, professional hierarchies, and generational cohorts. Their acceptance or rejection of new ideas depends not only on evidence but on factors such as professional investment, social standing, and the timing of their entry into the field. Furthermore, Planck's insight challenges the traditional image of scientific progress as a steady march toward truth. Instead, it suggests a more complex picture: scientific change involves both rational evaluation of evidence and irrational human factors such as professional pride, institutional inertia, and the simple fact of mortality. 
This does not diminish science's achievements; rather, it acknowledges that science succeeds despite - and sometimes because of - its human dimensions.

Limitations and Nuances

Whilst Planck's principle captures something important about scientific change, it requires qualification. Not all scientific progress depends on generational succession. Sometimes individual scientists do change their minds when confronted with compelling evidence. Moreover, the principle may apply differently across disciplines: experimental sciences with clear empirical benchmarks may see faster conversion of individuals than theoretical fields where evidence is more ambiguous. Additionally, in contemporary science with rapid communication and large collaborative teams, the generational mechanism may operate differently than it did in Planck's era. The principle also risks oversimplifying the psychology of scientific belief. Scientists are not uniformly stubborn or open-minded; individual variation is substantial. Some older scientists prove remarkably receptive to new ideas, whilst some younger ones cling to outdated frameworks. Planck's statement describes a statistical tendency rather than an iron law.

Legacy and Contemporary Relevance

Planck's principle remains strikingly relevant in contemporary science. Recent empirical research has suggested that the principle holds true: studies examining citation patterns and the adoption of new theories across scientific fields have found evidence that scientific change does indeed correlate with generational succession.5 This finding validates Planck's cynical but penetrating observation about the human side of science. The principle also offers perspective on current scientific controversies. When new theories encounter resistance from established researchers, Planck's insight suggests patience: the theory need not convince its opponents, only survive long enough to become the intellectual foundation of the next generation. 
This perspective neither dismisses the importance of evidence nor ignores the reality that scientific communities are composed of human beings with all their attendant limitations and biases. Ultimately, Planck's principle stands as a humble acknowledgement that science, despite its extraordinary achievements, remains a human activity. Its progress depends not only on the power of ideas and the weight of evidence, but on the passage of time, the succession of generations, and the simple biological fact that we all eventually die. In recognising this, Planck offered not a cynical dismissal of science but a more realistic and ultimately more profound understanding of how human knowledge actually advances.

References

1. https://buyscience.wordpress.com/history-of-science/plancks-principle/
2. https://en.wikipedia.org/wiki/Planck's_principle
3. https://quoteinvestigator.com/2017/09/25/progress/
4. https://insertphilosophyhere.com/science-its-tricky/
6. https://www.ophthalmologytimes.com/view/moving-forward-does-science-progress-one-funeral-at-a-time-


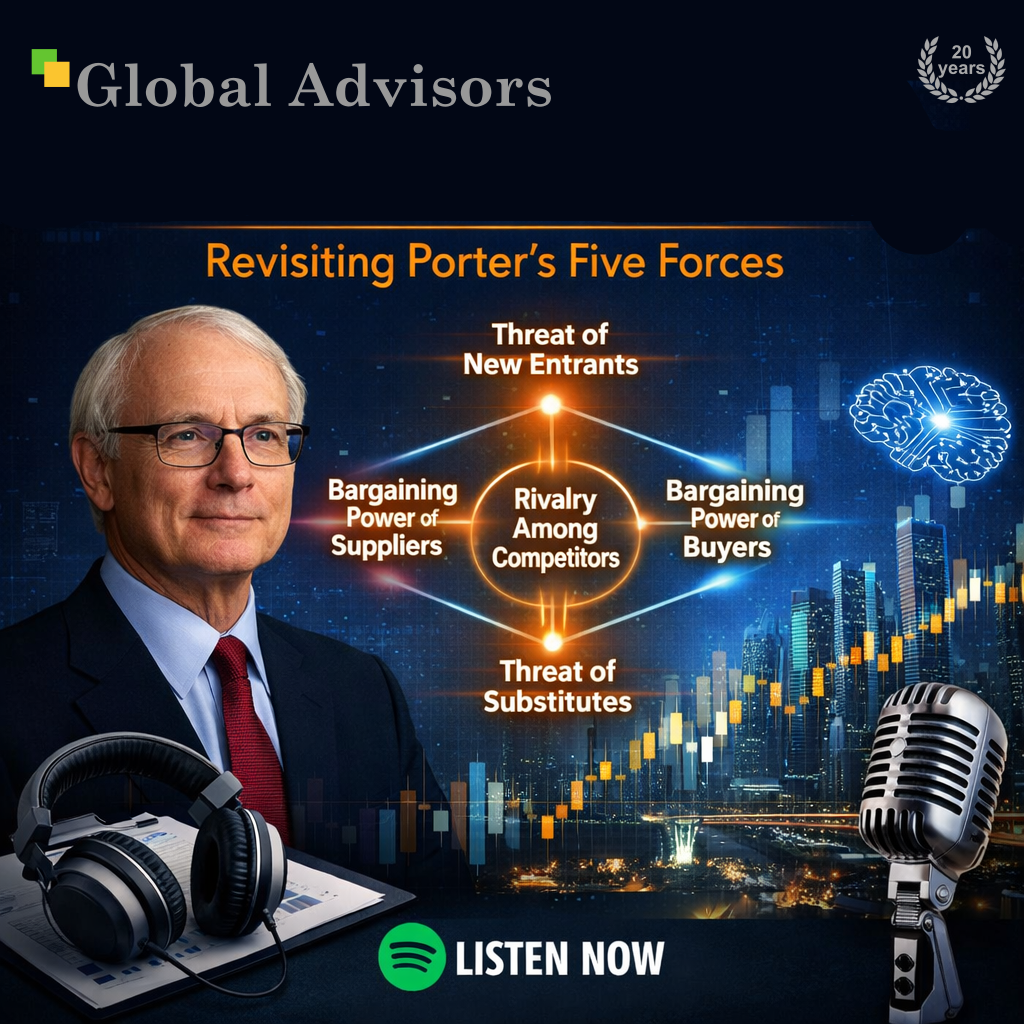

PODCAST: Quantifying Porter's Five Forces for CEOs

In this episode of the Global Advisors Spotify series, James and Lucy revisit Michael Porter’s Five Forces and reinterpret the framework for modern executives operating in volatile, digitally shaped markets. They trace its origins in industrial economics, examine its enduring value in understanding how profit is structurally distributed across industries, and address its limitations in a world shaped by platforms, network effects, data advantages, regulation, and AI. The discussion sets out the Global Advisors approach: move beyond qualitative strategy language, segment markets precisely, quantify structural forces with evidence, link analysis directly to capital allocation, and continuously recalibrate strategy as market conditions change. The result is a practical argument for more disciplined, data-backed, and ethically grounded strategic decision-making. Read more from the original article.


Term: Operational alpha

"Operational alpha refers to the incremental value created through improvements in a portfolio company's operating performance, independent of financial leverage or changes in valuation multiples." - Operational alpha

Operational alpha represents the incremental value created through improvements in a portfolio company's operating performance, independent of financial leverage or changes in valuation multiples.1 Rather than relying on financial engineering - such as debt restructuring or multiple expansion - operational alpha focuses on tangible, sustainable improvements to how businesses function and generate returns.

Core Definition and Scope

At its foundation, operational alpha encompasses the compounding effect of better decisions, faster execution, and scalable systems built on culture, structure, and technology.2 In wealth management and asset management contexts, operational alpha is essentially the value added by adopting more efficient processes and procedures, which is unrelated to the actual investment decision itself.3 This distinction is critical: operational alpha is about how well an organisation executes, not about market timing or investment selection alone. The concept extends beyond simple cost reduction. It encompasses risk mitigation and enhanced decision-making that investors achieve through streamlined systems and processes, ultimately reflecting an organisation's ability to withstand volatility and make sound decisions during market fluctuations.5

Evolution in Private Equity

The significance of operational alpha in private equity has grown substantially over the past decade. As capital flooded into private markets and competition intensified, the impact of financial engineering as a return driver began to diminish.1 With today's higher borrowing costs, compressed valuations, and more challenging deal environments, the trend has accelerated dramatically. Recent data underscores this shift. 
Research from Gain.pro, based on over 10,000 global private equity deals and exits, found that revenue growth accounted for 71% of total value creation at exit in 2024, compared to 64% the previous year.1 A 2024 McKinsey study of more than 100 private equity funds with post-2020 vintages discovered that firms focused on operational value-add achieved average internal rates of return that were 2-3 percentage points higher than their peers.1

Practical Implementation

Modern operational alpha strategies involve several key components:

Specific value creation drivers include revenue expansion through new products, market entry, or acquisitions; margin improvement through lean manufacturing and digitalisation; and operational turnarounds involving leadership professionalisation and efficiency gains.1

Modern Evolution: Beyond Portfolio Companies

Contemporary understanding of operational alpha has expanded beyond improving individual portfolio companies. Today, it increasingly refers to turning the investment firm itself into a high-performance machine through better decisions, faster execution, and scalable systems built on strong foundations of culture, structure, and technology.6 This represents a fundamental shift from viewing operational alpha as solely a portfolio company improvement tool to recognising it as a competitive advantage for the investment firm itself. Leading firms like LaSalle Investment Management, Affinius Capital, and Harrison Street are embedding ownership mindsets, feedback loops, agile structures, and integrated platforms to reduce friction, empower people, and future-proof operations.2

Real-World Impact

The tangible outcomes of operational alpha strategies are substantial. One private equity firm supported a global education provider in scaling through acquisitions, centralising operations, building digital infrastructure, and expanding product offerings such as personalised learning tools. Today, that business is a multibillion-pound leader in its sector.1 In another example, a sponsor led a full-scale operational turnaround of a United States manufacturing company, professionalising the leadership team, implementing lean practices, and expanding capacity, resulting in more than 3.5 times EBITDA growth and significantly stronger margins.1

Key Theorist: Steffen Pauls

Steffen Pauls has emerged as a leading voice articulating the strategic importance of operational alpha in contemporary private equity. 
Currently serving as chief executive officer of Moonfare, a private market investment platform, Pauls brings extensive practical experience in value creation and operational excellence. Pauls' career trajectory demonstrates deep engagement with operational value creation. He previously served on the value creation team at Kohlberg Kravis Roberts & Co. (KKR), one of the world's largest private equity firms, where he gained first-hand experience in how deeply embedded and professionalised operational functions have become within leading sponsors. This background positioned him uniquely to observe and articulate the fundamental shift occurring within private equity. In a 2024 letter to the Financial Times, Pauls argued that private equity is fundamentally changing, with higher interest rates eroding the role of financial engineering in the traditional buyout model.1 He contends that managers must return to the basics of corporate craftsmanship by supporting portfolio companies in their efforts to increase revenue, margins, or ideally both. This may include rolling out new products, fine-tuning business models, expanding into new markets, or optimising costs through lean manufacturing and digitalisation.1 Pauls' perspective is grounded in observable market trends rather than theoretical speculation. He notes that the move away from financial engineering is anticipated to accelerate further, with operational improvements identified as the primary return driver for deals expected to exit over the coming years.1 His work at Moonfare, engaging closely with operators behind many platform funds, continues to inform his understanding of how operational excellence translates into differentiated investment performance. Pauls represents a new generation of private equity leaders who recognise that sustainable competitive advantage comes not from financial engineering or market timing, but from the disciplined, hands-on operational improvement of portfolio companies. 
His articulation of operational alpha as the future of private equity has influenced industry thinking and practice, particularly as traditional leverage-based return drivers have diminished in effectiveness.

References

1. https://www.moonfare.com/blog/operational-alpha-private-equity
2. https://www.junipersquare.com/blog/operational-alpha
5. https://www.ai-cio.com/news/operational-alpha-can-provide-a-crucial-competitive-advantage/
6. https://www.propertychronicle.com/what-is-operational-alpha-a-guide-for-modern-gps/
9. https://jfdi.info/wp-content/uploads/2025/07/Achieving-Operational-Alpha-in-Private-Equity.pdf


Quote: Andrej Karpathy - AI Guru, Former head of Tesla AI

"I think the industry has to reconfigure in so many ways. The customer is not the human anymore. It's agents acting on behalf of humans, and this refactoring will probably be substantial." - Andrej Karpathy - AI Guru, Former head of Tesla AI

A Pivotal Shift in the AI Landscape

Andrej Karpathy, former Director of AI at Tesla and founding team member at OpenAI, stated: "I think the industry has to reconfigure in so many ways. The customer is not the human anymore. It's agents acting on behalf of humans, and this refactoring will probably be substantial." This quote, from a 20 March 2026 discussion on the No Priors podcast, highlights the transformative impact of AI agents on software development and industry infrastructure.

Context of Karpathy's Vision

Karpathy describes a rapid evolution in programming, in which AI agents have become reliable since late 2025. He notes that coding agents "basically didn't work before December and basically work since," exhibiting higher quality, long-term coherence, and tenacity.1,2,3,5 Traditional coding, meaning typing code into an editor, is giving way to delegating tasks in English, managing parallel agent workflows, and reviewing outputs.1,2 For example, Karpathy built a video analysis dashboard for home cameras in 30 minutes using an AI agent that handled errors and research autonomously.2 He emphasises this as "delegation," not magic, requiring high-level direction and taste.2

Implications for Programming and Industry

Karpathy predicts 2026 as a "high energy" year of industry adaptation, with LLMs surging ahead of integrations and workflows.3 Professionals must build for agent autonomy, echoing early frameworks like BabyAGI.1

Karpathy's Credentials

A leading AI expert, Karpathy advanced deep learning at OpenAI, led Tesla's Autopilot vision team, and coined "vibe coding." His insights reflect real-world shifts observed in early 2026.1,2

References

2. https://www.businessinsider.com/andrej-karpathy-programming-unrecognizable-ai-2026-2
3. https://paweldubiel.com/42l1%E2%81%9D--Andrej-Karpathy-quote-26-Jan-2026-
4. https://www.youtube.com/watch?v=HSshsQCEPC0
5. https://simonwillison.net/2026/Feb/26/andrej-karpathy/


!["If your job is the task, then you’re very highly [likely] going to be disrupted." - Quote: Jensen Huang - Nvidia CEO](https://globaladvisors.biz/wp-content/uploads/2026/03/20260324_09h30_GlobalAdvisors_Marketing_Quote_JensenHuang_GAQ.png)