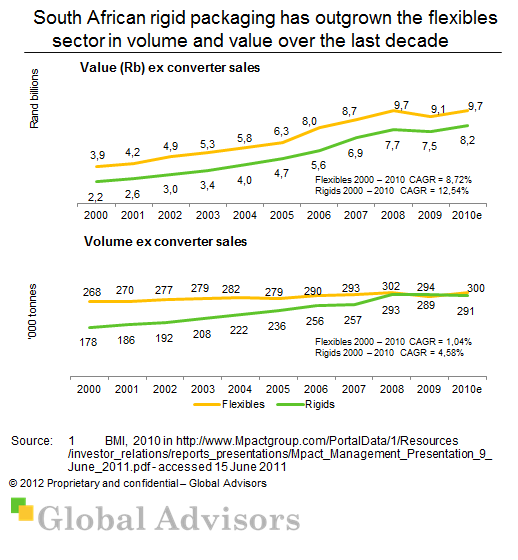

Rigid plastic packaging grew by 5.4% p.a. in volume and 14.0% p.a. in value from 2000 to 2010E (2010 figures estimated). This growth is expected to continue with the shift from glass and metal cans to PET plastic.
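
To put the two annual rates in perspective, the short sketch below compounds them over the period. It assumes the rates held constant for all ten years from 2000 to 2010 (the 2010 endpoint is itself an estimate). It also reads the gap between value and volume growth as growth in value per unit of volume. That reading is our own interpretation: it could reflect price, product mix or currency effects, and the source does not break it down. The function and constant names are illustrative only.

```python
def compound_multiple(rate: float, years: int) -> float:
    """Cumulative growth multiple implied by a constant annual rate."""
    return (1.0 + rate) ** years


def implied_unit_value_growth(value_rate: float, volume_rate: float) -> float:
    """Annual growth in value per unit of volume, given value and volume growth rates."""
    return (1.0 + value_rate) / (1.0 + volume_rate) - 1.0


# Assumption: constant rates over 10 compounding periods (2000 -> 2010E).
YEARS = 2010 - 2000
VOLUME_CAGR = 0.054  # 5.4% p.a. volume growth (from the text)
VALUE_CAGR = 0.140   # 14.0% p.a. value growth (from the text)

volume_multiple = compound_multiple(VOLUME_CAGR, YEARS)
value_multiple = compound_multiple(VALUE_CAGR, YEARS)
unit_value_cagr = implied_unit_value_growth(VALUE_CAGR, VOLUME_CAGR)

print(f"Volume multiple over {YEARS} years: {volume_multiple:.2f}x")
print(f"Value multiple over {YEARS} years: {value_multiple:.2f}x")
print(f"Implied value-per-unit growth: {unit_value_cagr:.1%} p.a.")
```

Under these assumptions, volume grows roughly 1.69x over the decade while value grows roughly 3.71x. That implies about 8.2% p.a. growth in value per unit.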

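Rigid packaging is better suited to local manufacture than flexible packaging because of its lower packing density. Empty rigid containers take up far more space per unit than flexible film, so shipping them over long distances is comparatively expensive.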